Introduction

Pretesting is the process of bringing together members of the priority audience to react to the components of a communication campaign before they are produced in final form. Pretesting measures the reaction of the selected group of individuals and helps determine whether the priority audience will find the components (usually draft materials) understandable, believable and appealing.

Components of a communication campaign that benefit from pretesting include:

- Key benefit and support points

- Messages

- Story boards

- Draft materials

- Name of campaign and logo

- Signature tune/music

- Translated text

- Interpersonal communication activities such as those used by peer educators or field workers

Keep in mind that for social and behavior change communication (SBCC) campaigns and materials to be most effective, they should be tested at several stages of development. In the SBCC process, four types of testing are typically conducted: concept testing, stakeholder reviews, pretesting and field testing. The graphic below demonstrates the relationship between the four types of testing. This guide covers only pretesting.

Why Conduct Pretesting?

Pretesting increases the impact of SBCC materials by determining whether what has been designed is suitable for the audience. It can save money, time and energy overall because problems are caught before production, making the resulting material more likely to be effective.

Pretesting should be conducted to gather information from the audience on the basic aspects or elements of the material, including:

Do not skip the pretesting phase. If there are not resources or time to conduct a large-scale pretest, even a small-scale pretest can offer useful insights if it is thoughtfully designed.

Who Should Conduct Pretesting?

A small, focused team of key program staff (3-4 people) should develop the plans for pretesting. For the pretest to be most effective, however, it is best to have people who resemble the priority audience, and who are trained in pretesting, lead the actual pretesting exercises. A facilitator who is like the audience encourages honesty and openness during the pretesting process. Some organizations may consider hiring a research firm to conduct the pretesting.

When Should Pretesting Be Conducted?

Pretesting should be completed after concept testing, message design, and materials development, and before components of the communication campaign are finalized, produced and disseminated.

Estimated Time Needed

Completing pretesting typically takes between two weeks and two months depending on the testing method, the objectives of the pretest, the number of campaign elements to be tested, and the number of revisions necessary. If materials or messages require a complete rework, it could take longer.

Learning Objectives

After completing the activities in the pretesting guide, the team will:

- Understand the steps and the stages of testing SBCC materials.

- Define and list the elements of pretesting.

- Know how to choose a pretesting method.

- Know how to conduct a pretest.

Prerequisites

Steps

Step 1: Outline Pretest Objectives

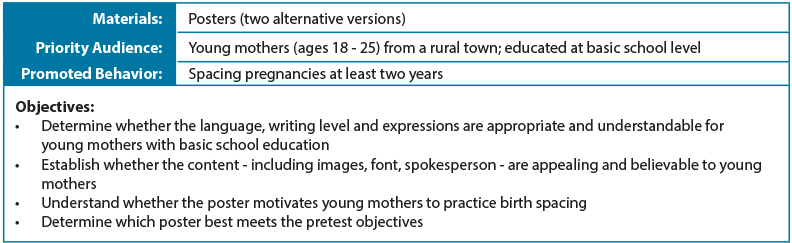

To guide the pretest process, the team should develop a plan with a clear set of objectives for each component or material being tested. The objectives describe the aims of the pretest and the information to be gathered. Start by reviewing the creative brief(s) for the SBCC campaign. The creative brief’s description of the priority audience, the promoted behavior and the key promise can be used to inform the pretest objectives.

Step 2: Choose the Pretest Method

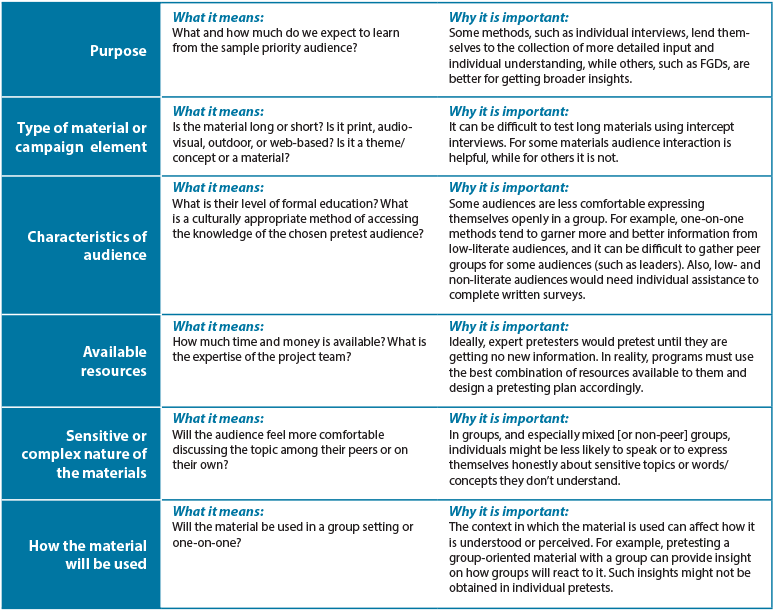

After the pretest objectives are established, select the pretest method. Choosing the right method(s), described in the table below, depends largely on the following:

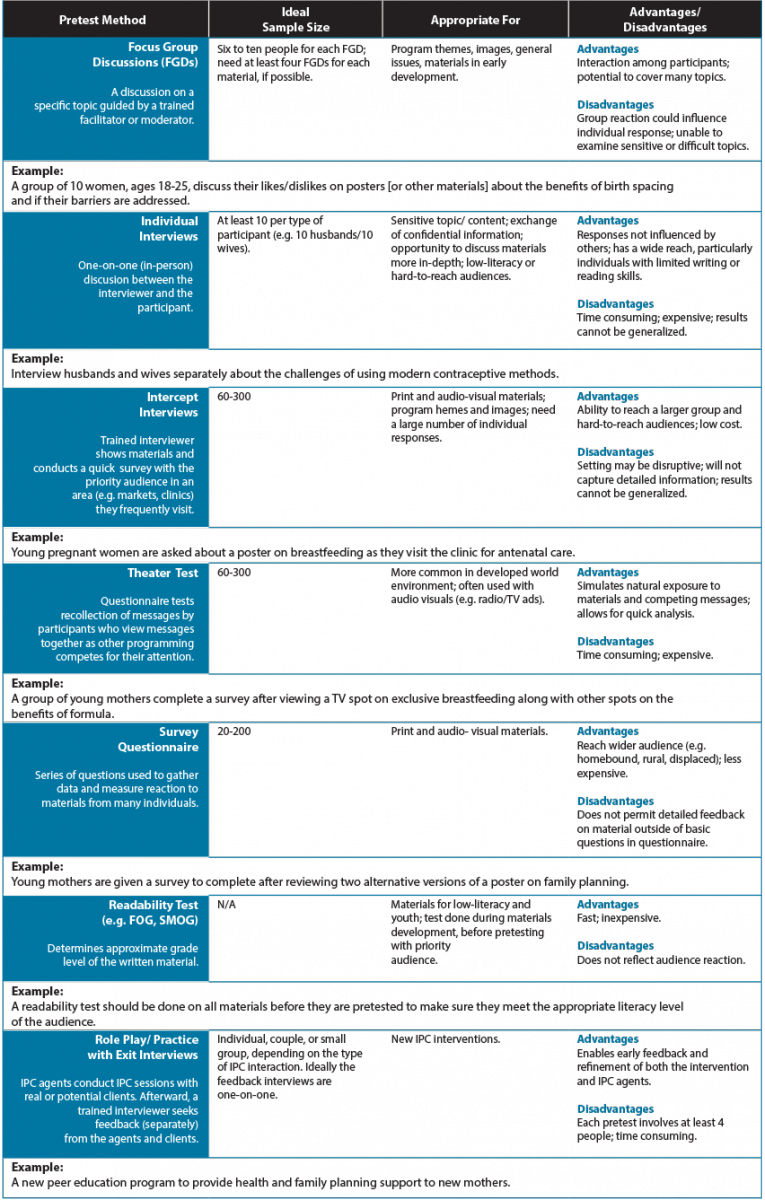

Review the table below for a list of pretesting methods. Keep in mind that using one method might limit the assessment. The use of mixed methods (e.g. survey questionnaire and in-depth interviews) is one way to capture additional information and fill gaps. Project teams should be able to articulate why they have chosen a certain method or methods for their pretest.

Step 3: Plan the Pretest

Plan the details of the pretest. This includes identifying the location and meeting site, recruiting participants, identifying facilitators and interviewers, determining incentives, and designing survey questionnaires or focus group discussion guides as needed. Below are some key points to keep in mind during this process:

Location: The priority audience should feel comfortable with the pretesting location. For example, it might be best to conduct the pretest in areas or places (e.g. clinics, churches) where the priority audience is most likely to encounter the materials.

Facilitators/Interviewers/Note-takers: For focus group discussions and in-depth interviews, make sure to identify trained or experienced moderators or facilitators. Trained facilitators can be found at universities, research firms, or partner organizations. If possible, use a facilitator who has similar characteristics (e.g. age, background) to the priority audience. This helps to develop trust and comfort among the participants. It is also important to have a trained note-taker who is familiar with the topic and speaks the local language.

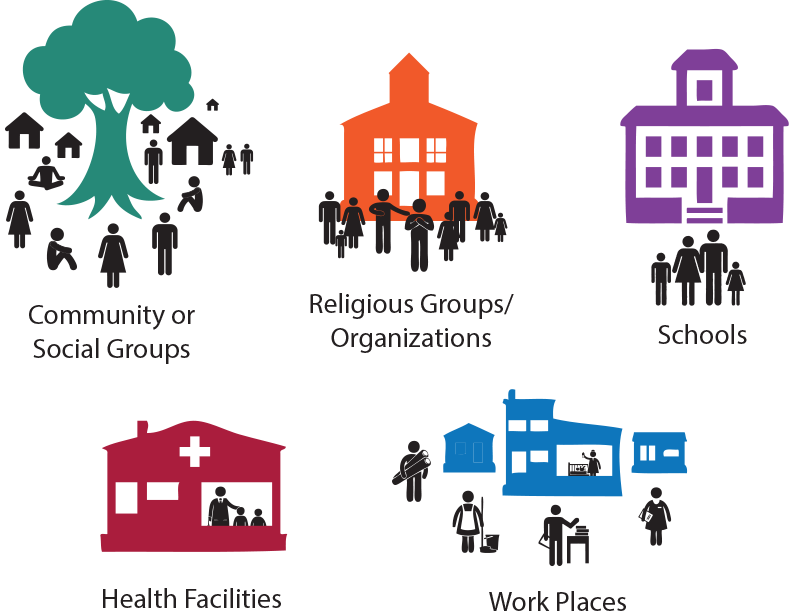

Participants: Use the creative brief to identify key characteristics of the priority audience. Select a sample of participants who match those characteristics to participate in the pretest. Participants should not have had any involvement in the development of your materials or in concept testing. The sample size and collection method will depend on the selected pretest method (see Pretesting Methods Table in Step 2). It is often helpful to over-recruit participants in case some do not show up or complete the pretest. The image below provides some ideas on where to recruit participants. Some organizations have membership lists that can be used for recruitment.
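The over-recruiting advice can be turned into a rough calculation. The sketch below is illustrative only: the function name `participants_to_invite` and the no-show percentage are assumptions rather than figures from this guide, so substitute attendance rates from comparable local activities where they are known.

```python
def participants_to_invite(target: int, expected_no_show_pct: int) -> int:
    """Return how many people to invite so that about `target` take part.

    `expected_no_show_pct` is an assumed whole-number percentage (0-99) of
    invitees who will not show up or will not complete the pretest.
    """
    if target < 0:
        raise ValueError("target must not be negative")
    if not 0 <= expected_no_show_pct < 100:
        raise ValueError("expected_no_show_pct must be between 0 and 99")
    attending_pct = 100 - expected_no_show_pct
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-target * 100 // attending_pct)


if __name__ == "__main__":
    # Hypothetical example: 8 participants wanted, 25% assumed no-show rate.
    print(participants_to_invite(8, 25))
```

The result is rounded up so that the group does not fall short of the target when the assumed rate holds.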

Cost: Create a budget to reflect costs for the meeting site, travel/accommodation, equipment rental, facilitator/moderator’s time, copies of draft materials, stakeholder meetings and incentives. Thoughtful budgeting can help ensure all pretesting costs are accounted for.

Step 4: Develop Pretesting Guide

Develop a pretesting guide that will serve as a reference for keeping the activity on track (see How to Conduct an Effective Pretest for sample pretest questions). The guide should include the following:

- Background information from the creative brief (e.g. description of SBCC campaign and priority audience)

- Pretest objectives

- Pretest plan (description of pretest method, location, participants, facilitators/moderators/note-takers)

- Pretest questions

- Plan for use of information gathered

Step 5: Develop Questions

The goal of pretest questions is to understand the value of the materials. For example, how effective are the posters in influencing young parents to practice birth spacing? A series of open-ended questions will gather specific details about the audience’s preferences. Avoid closed-ended (yes or no) questions and questions that lead participants to respond in a certain way.

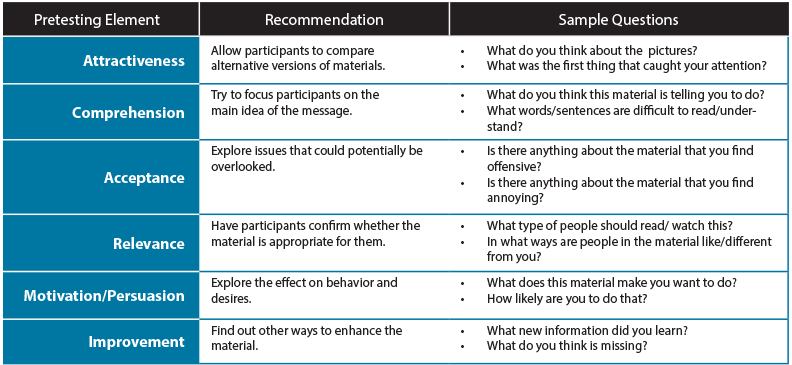

When developing questions, it is helpful to review the pretesting elements listed in the introduction. This will ensure questions are effective and meaningful (see example in table below). It is also important to include questions that will capture demographic information (e.g. age, education level, marital status, number of children) and details on how participants spend their day (e.g. media use, social gatherings). The program and creative teams should work together to contribute questions about behavior and design.

Salazar, 2008

Step 6: Conduct Pretest

Consent Forms: It is important to obtain participant consent (verbal or written) prior to the pretest. Consent forms are written agreements that show the individual has volunteered to participate in the activity. They also inform the participant of the risks involved (or clearly state that there is no risk).

Recording and Note-taking: Some pretests use a self-administered questionnaire. When this is not the case, use a pretest answer sheet to note verbal and nonverbal responses to the material. This promotes consistency among interviewers and pretest sessions. Include on the data sheet the date, time, place, name and type of material, audience, respondent number, element (e.g. image, text, font, audio/video segment, character), pretest questions, and other relevant information as appropriate. Pretests can be recorded to help remind or clarify, but recording should not take the place of note-taking (see Resources Section).
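One way to keep answer sheets consistent across interviewers is to fix the fields in advance. The following is a minimal sketch, assuming the team records responses electronically. The class name `PretestResponse`, the field names and the CSV layout are hypothetical choices that mirror the items listed above; they are not a format prescribed by this guide.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class PretestResponse:
    """One row of a pretest answer sheet (field names are illustrative)."""
    date: str
    time: str
    place: str
    material_name: str
    material_type: str
    audience: str
    respondent_number: int
    element: str  # e.g. image, text, font, audio/video segment, character
    question: str
    verbal_response: str
    nonverbal_response: str = ""


def to_csv(rows: list[PretestResponse]) -> str:
    """Serialize answer-sheet rows to CSV text with a header line."""
    buffer = io.StringIO()
    names = [f.name for f in fields(PretestResponse)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical entry, invented purely to show the shape of a record.
    example = PretestResponse(
        date="2024-01-15", time="10:00", place="Clinic waiting room",
        material_name="Birth spacing poster", material_type="poster",
        audience="Young parents", respondent_number=1, element="image",
        question="What do you think this picture is showing?",
        verbal_response="A family visiting a health worker",
        nonverbal_response="Pointed at the child first",
    )
    print(to_csv([example]))
```

Keeping nonverbal reactions in their own column makes it harder for note-takers to omit them.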

The specifics of how to conduct a pretest will differ based on the method. The pretesting guide in the Samples section outlines how to conduct a Focus Group Discussion (FGD) pretest. For details on how to conduct other types of pretests, see the Resources section.

With any pretest method, it is important to use open-ended and probing questions to obtain rich information and avoid unduly influencing respondents.

Step 7: Analyze Data and Interpret Results

Analyze the data and interpret the results of the pretest. To analyze:

- Look for trends in responses. If a certain problem or change is mentioned multiple times, it is something that likely needs to be addressed.

- Determine whether results highlight fundamental flaws with the design, messages, or format. If so, the material may need to be completely redesigned. Otherwise, basic revisions should address the problems.

- Consult materials development experts about the suggested changes or problems highlighted. Do not feel compelled to make every change participants suggest.
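Looking for trends can be as simple as tallying how many respondents raised each issue. The sketch below assumes the team has already coded its notes into short issue labels; the function name `recurring_issues`, the labels and the threshold of two mentions are all hypothetical and should be adapted to the pretest at hand.

```python
from collections import Counter


def recurring_issues(
    coded_notes: list[list[str]], min_mentions: int = 2
) -> list[tuple[str, int]]:
    """Return issues raised by at least `min_mentions` respondents.

    `coded_notes` holds one list of issue codes per respondent. Each code is
    counted once per respondent so that a single talkative participant cannot
    inflate the tally.
    """
    counts: Counter[str] = Counter()
    for respondent_codes in coded_notes:
        counts.update(set(respondent_codes))
    # Most frequent first; ties are broken alphabetically for stable output.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(code, n) for code, n in ranked if n >= min_mentions]


if __name__ == "__main__":
    # Hypothetical coded notes from four respondents.
    notes = [
        ["text_too_small", "image_unclear"],
        ["text_too_small"],
        ["text_too_small", "colors_disliked"],
        ["image_unclear"],
    ]
    for code, n in recurring_issues(notes):
        print(f"{code}: raised by {n} respondents")
```

A tally like this only flags candidates for revision. Whether a recurring issue points to a fundamental flaw or a basic fix remains a judgment for the team and its materials development experts.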

Step 8: Summarize the Results

Communicate the results of the pretest. Write a report outlining the process and the findings. The report should have the following sections:

- Background: What was tested? What were the pretest objectives? Which audience was involved in the process? Why? How? How many participants were involved in the pretest?

- Highlights: Summarize the main points that came up during the testing.

- Findings: Present a complete report on the findings. Where appropriate, describe the participants’ reactions, incorporate key quotes and describe which creative ideas and concepts worked the best versus those that were not appealing or effective.

- Conclusions: Describe the patterns that came up and/or the major differences that were observed across the individuals and/or groups.

- Recommendations: Suggest and prioritize revisions for the tested creative ideas, concepts, and/or materials based on the findings and conclusions.

The results should be discussed among those involved with designing the messages, creating the materials and conducting the pretest. This includes program staff, designers, writers, editors, interviewers and note-takers (see Pretest Report Sample under samples).

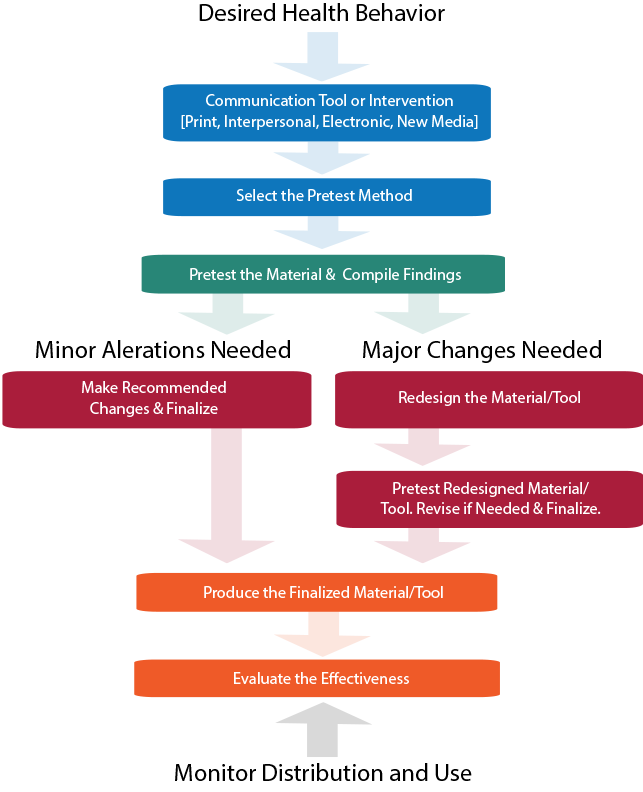

Step 9: Revise Materials and Retest

If the results of the pretest indicate that major revisions are needed, a complete redesign may be required. Once the materials have been revised, pretest the new version if budget and time allow. The same questionnaire or FGD guide can be used as before with questions added or changed as needed on the particular areas of concern. This is to make sure the problem from the first design is addressed in the newer version.

Samples

Making Health Communication Programs Work

Methodology for Pretesting Rock Point (RP) 256 Comic Book

Pretest Discussion Guide for VMMC [Kenya]

Sample Focus Group Discussion and In-Depth Interview Guides

Pretest Report of Triple S, ‘Sexy, Smart and Safe’ Health Promotion Campaign

Tips & Recommendations

- Pretest some options and alternate concepts if possible, not only one version of a material.

- Even if you are pretesting a draft and not a final draft, the draft should be as close to the final version as possible so that those reviewing it can judge it appropriately.

- Be open-minded about the outcome of the pretest. If you have already decided what you will find, you will only hear that and miss important insights.

- Don’t use convenience sampling. The group of people most convenient for you to gather may not best represent your priority audience for the material.

- Check with the donor and local government to see whether Institutional Review Board (IRB) approval is needed prior to the pretest.

Lessons Learned

- Pretesting is the key to understanding how a priority audience will react and respond to SBCC messages and materials.

- Pretesting can save money, time and energy overall as the resultant material will be most suitable for the priority audience and will not run the risk of being inappropriate, misunderstood or rejected.

Glossary & Concepts

- Concept testing seeks feedback about general ideas, concepts and creative concepts; typically done before materials are developed.

- Field testing allows practitioners to observe whether the SBCC materials are used effectively in their intended settings and contexts, usually through observation and focus group discussions. It determines whether the material meets the intended purpose.

- FOG Readability Test measures the readability of writing by estimating how many years of formal education are needed to understand a text. The fog index is calculated by selecting a passage of text, determining the average sentence length, calculating the percentage of complex words (words with three or more syllables), adding the average sentence length and the percentage of complex words, and multiplying the sum by 0.4.

- Incentives are small gifts of appreciation to participants. They might include cash, a snack (e.g. drink, tea, biscuits), phone credit, transport money or hygiene products (e.g. soap, toothbrush).

- Probing questions are follow-up questions that reiterate and clarify a participant’s comment while asking for further information. Some probes can be developed in advance.

- SMOG Readability Test is a measure of readability that estimates the years of education needed to understand a piece of writing. It is calculated by selecting 30 sentences, counting the words with three or more syllables, and applying a formula.

- Stakeholders can include all those involved in or affected by the health or social issue, including public, private and NGO sector agencies, relevant Government Ministries, service delivery groups, audience members, advertising agencies, media and technical experts.

- Stakeholder reviews are input from technical experts, partners and decision-makers prior to finalizing materials. These reviews do not replace pretesting with the priority audience and can be done before or after pretesting.
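The two readability tests defined in this glossary can be sketched in a few lines. The functions below take hand counts from a sample passage rather than counting syllables automatically, since automatic syllable counting is unreliable. The function names are hypothetical, and the constants (0.4 for the fog index; 1.0430 and 3.1291 for SMOG) come from the standard published formulas rather than from this guide.

```python
import math


def fog_index(total_words: int, total_sentences: int, complex_words: int) -> float:
    """Gunning fog index from hand counts of a sample passage.

    `complex_words` is the number of words with three or more syllables.
    """
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("word and sentence counts must be positive")
    average_sentence_length = total_words / total_sentences
    percent_complex = 100 * complex_words / total_words
    return 0.4 * (average_sentence_length + percent_complex)


def smog_grade(polysyllable_words: int, total_sentences: int = 30) -> float:
    """SMOG grade from the count of words with three or more syllables.

    The count is scaled to a 30-sentence sample when fewer or more
    sentences were actually examined.
    """
    if total_sentences <= 0:
        raise ValueError("sentence count must be positive")
    scaled_count = polysyllable_words * (30 / total_sentences)
    return 1.0430 * math.sqrt(scaled_count) + 3.1291


if __name__ == "__main__":
    # Hypothetical counts: 100 words, 5 sentences, 10 complex words.
    print(round(fog_index(100, 5, 10), 1))
    # Hypothetical counts: 36 polysyllabic words in 30 sentences.
    print(round(smog_grade(36), 1))
```

Both scores approximate years of schooling, so a lower number suggests text that is easier for audiences with less formal education. Readability scores complement, but do not replace, feedback from the priority audience.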

Resources and References

Resources

Beyond the Brochure: Alternative Approaches to Effective Health Communication

Making Health Communication Programs Work

7 Tips for Conducting Intercept Surveys

Steps for Conducting Focus Groups or Individual In-depth Interviews

References

- Bertrand, Jane T. 1978. Communications Pretesting. University of Chicago, Community and Family Study Center.

- Brown, K., Lindenberger, J. & Bryant, C. (2008). Using pretesting to ensure your messages and materials are on strategy [Electronic version]. Health Promotion Practice, 9 (2), 116-122.

- C-Change (Communication for Change) (2012). C-Bulletins: Developing and Adapting Materials for Audiences with Lower Literacy Skills. Washington, DC: FHI 360/C-Change.

- Escalada, M. (2007). Pretesting and evaluation of communication materials.

- Escalada, Monina M. 1985 Pretesting Radio Dramas on Integrated Pest Management, Manila, Philippines

- Flanagan, Mahler and Cohen. How to Conduct Effective Pretests: Ensuring Meaningful BCC Messages and Materials, AIDSCAP Handbook

- Johns Hopkins Bloomberg School of Public Health. Fundamentals of program evaluation: Course 380.611. Communication pretesting, needs assessment (U.S.).

- Lozare, Benjamin V. et al. 2011. Leadership in Strategic Health Communication: Making a Difference in Infectious Disease and Reproductive Health. Johns Hopkins University Bloomberg School of Public Health, Center for Communication Programs

- Population Services International. Pretesting Toolkit.

- Population Services International. The effects of interview method on self-reported sexual behavior and perceptions of community norms in Botswana.

Banner Photo: © 2006 Basil Safi, Courtesy of Photoshare