Introduction

What is an indicator?

Indicators are tools used to measure Social and Behavior Change Communication (SBCC) program progress. They are used to assess the state of a program by defining its characteristics or variables, and then tracking changes in those characteristics over time or between groups. Clear indicators are the basis of any effective monitoring and evaluation system.

Why are indicators necessary?

To track how an SBCC program evolves and progresses towards its goals, you need to be able to measure change over time. Indicators provide data that can be measured to show changes in relevant SBCC program areas.

While partners in the community and key stakeholders will help design an SBCC program, it is ultimately the responsibility of the organization to assess its success and report results to the donor. Indicators are used to create targets that allow program staff to measure up-to-date characteristics of the program’s success and assess whether those results are in line with program expectations. The indicators themselves are vital to this process, as they are the key for successful tracking of program changes or problems.

As a tracking device, indicators alert managers to any needed mid-course adjustments if the program is found to be having unexpected difficulties or going off track. At the end of the program, they are measured to validate the success and achievements of the intervention.

Who should develop indicators?

Indicators should be developed by the research staff in close collaboration with program staff and any government or NGO counterparts who are designing the program and have clear knowledge of the program goals and objectives. Once agreed upon, indicators give all parties (program managers and personnel, researchers, and key stakeholders) a common framework against which to measure the progress and success of the program over time.

When should indicators be developed?

Indicators should be developed at the beginning of SBCC programs. They help researchers and program managers track progress over the life of the program and measure its results at the end.

Who is this guide for?

This guide is designed primarily for program managers or personnel who are not trained researchers themselves but who need to understand the rationale and process of conducting research. It can help managers make the case for research and ensure that research staff have adequate resources to conduct it, so that the program is evidence-based and its results can be tracked over time and measured at the end of the program.

Learning Objectives

After completing the steps in the indicators guide, the team will be able to:

1. Explain how to create indicators

2. Identify when to use indicators

3. Know how to set baselines and targets using indicators

Prerequisites

How to Develop a Logic Model and/or How to Develop a Theory of Change

How to Develop a Monitoring and Evaluation Plan

Steps

Step 1: Identify What to Measure

The first step to creating program indicators for monitoring and evaluation is to determine which characteristics of the program are most important to track. A program will use many indicators to assess different types and levels of change that result from the intervention, like changes in certain health knowledge, attitudes, and behaviors among the priority audience(s). Referring to the program’s logic model can help to identify key program areas that need to be included in monitoring indicators.

Indicators fall under the three stages of the logic model, which include:

- Inputs – resources, contributions, and investments that go into a program

- Outputs – activities, services, events and products that reach the priority audience(s)

- Outcomes – results or changes for the priority audience(s)

Each stage of the logic model can use indicators to assess inputs, outputs, and outcomes. Process indicators cover inputs as well as outputs and provide information about the scope and quality of activities implemented; these are considered monitoring indicators. Performance indicators cover outcomes and are most commonly used to measure progress towards results; these are considered evaluation indicators.
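The mapping between logic model stages and indicator types described above can be sketched in a few lines of Python. This is only an illustration: the `Stage` enum, the `indicator_type` function, and the three example indicators are hypothetical names and data, not part of any program's actual monitoring plan.

```python
from enum import Enum


class Stage(Enum):
    """The three stages of the logic model."""
    INPUT = "input"
    OUTPUT = "output"
    OUTCOME = "outcome"


def indicator_type(stage: Stage) -> str:
    """Return the indicator type associated with a logic model stage."""
    # Inputs and outputs are tracked with process (monitoring) indicators;
    # outcomes are tracked with performance (evaluation) indicators.
    if stage in (Stage.INPUT, Stage.OUTPUT):
        return "process (monitoring)"
    return "performance (evaluation)"


# Hypothetical indicators, each tagged with its logic model stage.
indicators = [
    ("Budget spent on radio airtime", Stage.INPUT),
    ("Number of community events held", Stage.OUTPUT),
    ("Percent of community members who discussed a campaign message", Stage.OUTCOME),
]

for name, stage in indicators:
    print(f"{name}: {stage.value} -> {indicator_type(stage)}")
```

Tagging each indicator with its stage in this way makes it easy to check that every stage of the logic model has at least one indicator.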

Step 2: Use the SMART Process to Develop High-Quality Indicators

One way to develop good indicators is to use the SMART criteria, as explained below. Consider each of these points when developing new indicators or revising old ones.

- Specific: The indicator should accurately describe what is intended to be measured, and should not include multiple measurements in one indicator.

- Measurable: Regardless of who uses the indicator, consistent results should be obtained and tracked under the same conditions.

- Attainable: Collecting data for the indicator should be simple, straightforward, and cost-effective.

- Relevant: The indicator should be closely connected with each respective input, output or outcome.

- Time-bound: The indicator should include a specific time frame.

| Implemented between 2008 and 2011 in Tanzania, the Fataki Campaign was designed to address the potential risk of HIV exposure in intergenerational relationships, through which older men offer young women financial or material goods in exchange for sex. This campaign included various mass-media and community-based activities. The monitoring and evaluation process for this campaign used multiple indicators to track the progress of the intervention, including ones used to track community discussions about Fatakis. One such indicator is used in the example below. Note how much the indicator improves through this SMART process. |

The example below uses the SMART approach to improve an indicator related to interpersonal communication about cross-generational sex.

| 1. What is the input/output/outcome being measured? Outcome: An increase in interpersonal communication about cross-generational sex as a result of the Fataki campaign. |

| 2. What is the proposed indicator? Percent that have talked to someone about cross-generational sex. |

| 3. Is this indicator specific? It describes what people are talking about but does not specify the audience to be measured or whom they are talking to. The indicator should state what percent of which population has talked with whom about cross-generational sex. |

| 4. Is this indicator measurable? Yes, but additional refinement would make it easier to replicate over time. Some participants may discuss cross-generational sex even if they were not exposed to the Fataki Campaign. A better way to assess this would be to change discussion about cross-generational sex to discussion about a “Fataki” message. Also, interpersonal communication implies a two-way discussion. Therefore, the indicator should include “discussed with” rather than “talked to.” |

| 5. Is the indicator attainable? This indicator is attainable because data for this indicator will be collected through a question in a larger, project-funded survey. |

| 6. Is the indicator relevant and related to the input/output/outcome being measured? This is directly related to the outcome, as individuals who have talked to someone about cross-generational sex have likely participated either directly or indirectly in interpersonal communication about the campaign. |

| 7. Is this indicator time-bound? This indicator is implicitly time-bound, but not explicitly. A phrase such as “ever” or “in the last three months” should be added to clarify the time frame. |

| 8. Based on answers to the above questions, what is the revised proposed indicator? Percent of community members that have discussed a “Fataki” message in the last three months with another person in the community. |
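To show how a revised indicator like this one might be calculated from survey data, here is a minimal Python sketch. It assumes each survey response records the date on which the respondent last discussed a “Fataki” message with another community member (or `None` if never), and it approximates “the last three months” as 90 days. The function name, the data, and the 90-day window are illustrative assumptions, not the campaign's actual survey design.

```python
from datetime import date, timedelta


def percent_discussed(responses, survey_date, window_days=90):
    """Percent of respondents who discussed a message within the time window.

    `responses` holds one entry per surveyed community member: the date of
    their most recent discussion, or None if they never discussed a message.
    """
    if not responses:
        raise ValueError("no survey responses to analyze")
    cutoff = survey_date - timedelta(days=window_days)
    recent = sum(
        1 for last in responses
        if last is not None and cutoff <= last <= survey_date
    )
    return 100.0 * recent / len(responses)


# Hypothetical survey data for four community members.
responses = [date(2011, 5, 20), None, date(2010, 12, 1), date(2011, 4, 2)]
survey_date = date(2011, 6, 1)

result = percent_discussed(responses, survey_date)
print(f"{result:.1f}% discussed a message in the last {90} days")
```

Making the time window an explicit parameter reflects the time-bound criterion: the same calculation yields a different indicator if the window changes, so the window should be fixed before data collection begins.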

Step 3: Establish a Reference Point

To show change or progress in a program, a reference point must be established. A reference point is a point before, during, or at the end of a program where indicators are used to establish the state of the program in terms of the audience’s knowledge, attitudes or behavior in order to provide a point of comparison as the program progresses. The reference point is often chosen before or at the start of a program to assess the progress of the program over time. However, implementation timelines do not always allow for baseline data to be collected. In these cases, reference points can be set up at other times in the program.

Depending on the stage of the intervention, a reference point can be established in one of several ways (see Figure 1 in Step 5):

| Intervention has not begun | Intervention has begun | Intervention is over |
|---|---|---|
| Establish the reference point immediately before it begins. This point is usually referred to as a baseline. | See if any data related to the program indicators were collected in other surveys targeting similar populations. For example, use data from large-scale national surveys like the Demographic and Health Surveys (DHS). | A reference point can be established through a control group. Identify a sample group that has not been exposed to the intervention and is demographically, geographically, culturally, and socially similar to the intervention group. Then administer data collection on program indicators with this group. |
| | If comparable measurements in other surveys/programs cannot be found, use the program indicators to collect data on the current state of the program, even if it has already begun. | |

For example, the Fataki campaign described earlier chose to establish a reference point through a control group, which was then compared to those who were exposed to the Fataki campaign. This method is an acceptable way of evaluating a program, although it creates complications when used for ongoing monitoring.

Step 4: Set Targets

Targets define the path and end destination of what a program hopes to achieve. Each target is a number or percentage against which success will be measured. Once the reference point is established, determine what changes should be seen in the program’s indicators that would reflect progress towards success.

When establishing targets, consider:

- Baseline data or reference point: This sets a certain point in time in the program from which to observe change over time.

- Stakeholders’ expectations: Understanding the expectations of key stakeholders and partners can help set reasonable expectations for what can be achieved.

- Recent research findings: Do a literature search, if literature is available, for the latest findings about local conditions and the program sector, or conduct focus group discussions (FGDs) or in-depth interviews (IDIs) in order to set realistic targets.

- Accomplishments of similar programs: Identify relevant information on similar programs that have been implemented under comparable conditions. Those with a reputation for high performance can often provide critical input on setting targets.

The table below provides an example of how to visually organize inputs/outputs/outcomes, indicators, reference points and targets, using the same Fataki campaign described earlier. Making a table like the one below can provide a method for tracking the progress of the program and understanding how each indicator, reference point, and target fits with the logic model.

| Input/Output/Outcome | Indicator | Reference point | Target |
|---|---|---|---|
| Outcome: An increase in interpersonal communication about cross-generational sex as a result of the Fataki campaign. | Percent of community members that have ever discussed a “Fataki” message with another person in the community. | Among those not exposed to the Fataki campaign, 0% have discussed a “Fataki” message with someone. | Among those who have been exposed to the Fataki campaign, 65% have discussed a “Fataki” message with someone. |
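One simple way to read an indicator against its reference point and target is to express the observed value as a share of the distance between the two. The Python sketch below is a hedged illustration of that arithmetic. The 0% reference point and 65% target come from the table above, while the observed value of 39% and the function name are hypothetical.

```python
def share_of_target_achieved(reference, target, observed):
    """Percent of the planned change (reference -> target) achieved so far."""
    if target == reference:
        raise ValueError("target must differ from the reference point")
    return 100.0 * (observed - reference) / (target - reference)


reference_pct = 0.0   # reference point from the table above
target_pct = 65.0     # target from the table above
observed_pct = 39.0   # hypothetical value measured at a later benchmark

progress = share_of_target_achieved(reference_pct, target_pct, observed_pct)
print(f"{progress:.1f}% of the planned change has been achieved")
```

A value below 100 means the target has not yet been reached. A negative value means the indicator has moved away from the target since the reference point.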

Step 5: Determine the Frequency of Data Collection

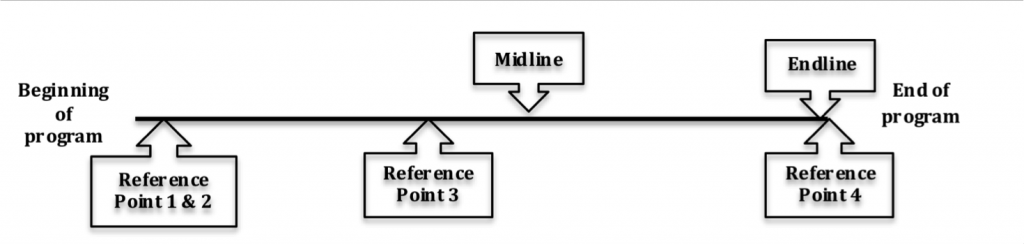

As a last step, consider how often data should be collected in order to properly track the program’s progress. These designated points in time are usually referred to as benchmarks. Ideally, at least one round of data collection should occur between the reference point and the end of the program. Data collected at the midpoint of the program are called a midline, and data collected at the end of the program are called an endline (see Figure 1). In the Fataki example, only endline data were collected. The frequency of data collection depends mostly on the cost and length of the program: longer programs, or those with more funding, can typically collect comprehensive data more frequently than shorter programs or those with less funding.

Figure 1
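As a rough illustration of how benchmarks might be laid out on a calendar, the Python sketch below derives baseline, midline, and endline dates from a program's start and end dates. The function name and the program dates are hypothetical, and placing the baseline exactly on the start date is a simplifying assumption, since a baseline is normally collected immediately before the program begins.

```python
from datetime import date, timedelta


def benchmark_dates(start, end):
    """Return baseline, midline, and endline dates for a program."""
    if end <= start:
        raise ValueError("program end date must be after its start date")
    # The midline falls halfway through the program, rounded down to a whole day.
    midline = start + timedelta(days=(end - start).days // 2)
    return {"baseline": start, "midline": midline, "endline": end}


# Hypothetical one-year program.
schedule = benchmark_dates(date(2024, 1, 1), date(2024, 12, 31))
for name, when in schedule.items():
    print(f"{name}: {when.isoformat()}")
```

Programs with more time or funding could add further benchmarks between these three points, while shorter programs may only be able to afford an endline.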

Conclusion

Proper indicators are crucial to any program as they provide data needed to track program progress. By closely tracking the progress of a program, any problems can be quickly identified and addressed. Being able to address problems in a timely manner can help improve programs and ensure better results. Better results allow for informed progress reports grounded in evidence, which help prove the effectiveness of a program to current and future funders.

To make the most of indicators, ensure that they are “SMART” (Specific, Measurable, Attainable, Relevant, and Time-Bound) and establish a point of reference, targets, and a frequency of data collection for effective program monitoring and evaluation.

Templates

Developing Indicators: A SMART Criteria Checklist

Tips & Recommendations

- Remember that no indicator will meet all of the SMART criteria equally. Use discretion in determining what will provide a balance between validity and practicality.

- Although the interests of stakeholders are critical to selecting proper indicators, this does not mean that indicators must be created to capture every stakeholder concern. The managers of the program must use their best judgment to include stakeholder interests where possible and appropriate.

Glossary & Concepts

Inputs include the resources, contributions, and investments that go into a program.

Outputs are the activities, services, events and products that reach the program’s primary audience.

Outcomes are the results or changes related to the program’s intervention that are experienced by the primary audience.

Process indicators provide information about the scope and quality of activities implemented, and consist of inputs as well as outputs; these are considered monitoring indicators.

Performance indicators are most commonly used to measure progress towards results, and include outcomes; these are considered evaluation indicators.

Reference point is a point before, during, or at the end of a program where indicators are used to establish program characteristics in order to provide a point of comparison as the program progresses.

Targets are pre-established goals that are set for the program.

Benchmarks are designated points in time at which data are collected to track the program’s progress.

Midline refers to data collected at the midpoint of a program.

Endline refers to data collected at the end of a program.

Resources and References

References

UNAIDS. Monitoring and Evaluating Fundamentals. An Introduction to Indicators.

UNDP. Selecting Indicators for Impact Evaluation
